The important benefit of using an established connection and matching TCP packets to send a TTL-based probe is that such traffic is allowed through by many stateful firewalls and other defenses without further inspection, since it is related to an entry in the connection table. This is opposed to sending stray packets, as traceroute-type tools usually do.

ASS is an Autonomous System Scanner.

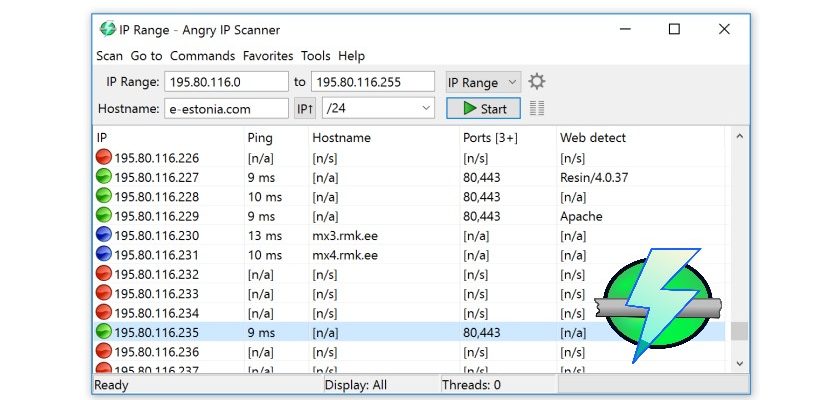

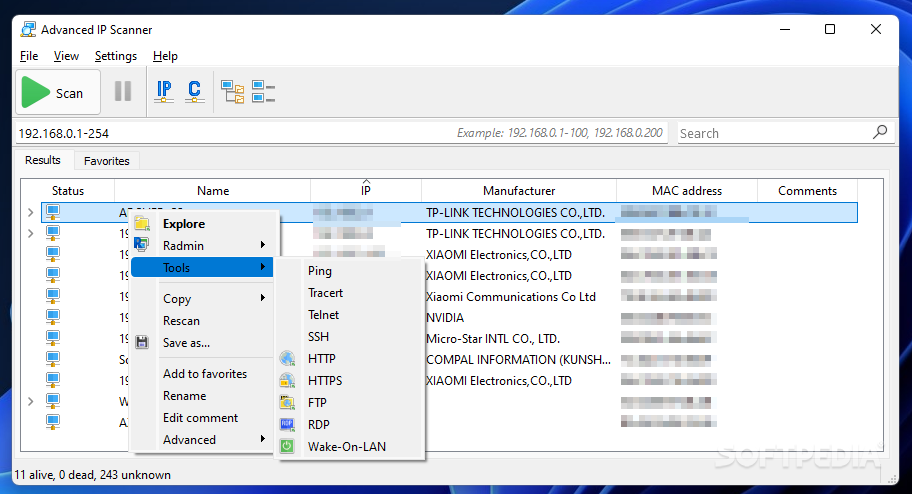

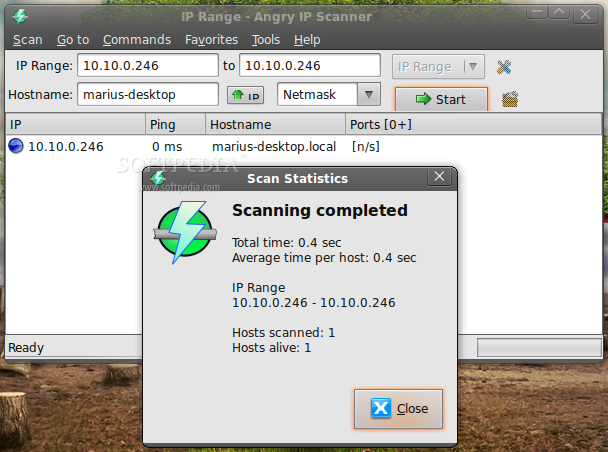

This tool enables the user to perform hop enumeration ("traceroute") within an established TCP connection, such as an HTTP or SMTP session.

Tools Found on BackTrack 3.0 Final (This wiki is still being updated for the latest release)

1.2.2 Angry IP Scanner (ipscan) 3.0-beta3

1.2.20 ScanLine 1.01 (Windows Executable)

1.2.25 UnicornScan pgsql 0.4.6e module version 1.03

Once a Spark application has completed, port 4040 (the default port of the web UI) is no longer available. The other address that was tried did not help either: 18080 is the default port of the Spark History Server, which is completely different from a Spark application. It is a separate process and may or may not be available, regardless of whether any Spark applications are running. It is still possible to construct the UI of an application through Spark's history server, provided that the application's event logs exist.

You have to start the Spark History Server yourself to have port 18080 available. Starting it creates a web interface (on port 18080 by default) that lists incomplete and completed applications and attempts. Moreover, you have to set the event logging configuration properties to be able to view the logs of Spark applications once they have completed their execution. The Spark jobs themselves must be configured to log events, and to log them to the same shared, writable directory. For example, if the server was configured with a log directory of hdfs://namenode/shared/spark-logs, then the client-side spark.eventLog options would switch event logging on (true) and point the event log directory at that same path.
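The client-side settings described above can be sketched as follows. This is a minimal illustration rather than part of the original answer: the property names `spark.eventLog.enabled` and `spark.eventLog.dir` are taken from the Spark configuration documentation, not from the text above, and the helper function names are hypothetical. The history server itself would need to read from the same directory (in the Spark documentation this is the `spark.history.fs.logDirectory` property).

```python
def event_log_conf(log_dir):
    """Return client-side properties that make a Spark job write event logs.

    Property names come from the Spark configuration docs (an assumption
    relative to the text above, which does not spell them out).
    """
    return {
        "spark.eventLog.enabled": "true",
        "spark.eventLog.dir": log_dir,
    }


def to_spark_submit_args(conf):
    """Render the properties as repeated --conf key=value arguments."""
    args = []
    for key, value in conf.items():
        args += ["--conf", f"{key}={value}"]
    return args


def to_defaults_file(conf):
    """Render the properties in the 'key value' form used by spark-defaults.conf."""
    return "\n".join(f"{key} {value}" for key, value in conf.items())


# Example with the shared log directory mentioned in the text.
conf = event_log_conf("hdfs://namenode/shared/spark-logs")
print(to_defaults_file(conf))
```

The same dictionary can be passed to `to_spark_submit_args` when the settings should apply to a single job instead of the whole installation.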

I tried to access the Spark web UI at 10.0.2.15:4040, but the page is inaccessible. Trying another address did not help either. Using ping 10.0.2.15, the result is:

Pinging 10.0.2.15 with 32 bytes of data
Ping statistics for 10.0.2.15: Packets: sent = 4, received = 0, lost = 4 (100% loss)

I also checked port 4040 using netstat -a to verify which ports are available. The result is as follows:

Active connections: Local address, Remote address, State

PS: Note that my code itself runs successfully.

Spark reports that the web UI (known internally as SparkUI) is bound to port 4040 with this line:

INFO Utils: Successfully started service 'SparkUI' on port 4040.

As long as the Spark application is up and running, you can access the web UI at that port. The following line marks the point at which a Spark application has finished (it does not really matter whether it finished properly or not):

INFO SparkContext: Successfully stopped SparkContext

From then on, the web UI is no longer available. That is why 10.0.2.15:4040 is inaccessible, and it is the expected behaviour of a Spark application.
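A quicker way to tell whether the web UI is still being served than reading netstat output is to attempt a TCP connection to the port. The sketch below uses only the Python standard library; the function name `is_port_open` is hypothetical, and the host and ports used in the note afterwards are the ones mentioned in the text.

```python
import socket


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection raises OSError (refused, unreachable, timed out)
        # when nothing is accepting connections on that address.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Calling `is_port_open("10.0.2.15", 4040)` would be expected to return True only while the Spark application is running, and `is_port_open("10.0.2.15", 18080)` only if a history server has been started on that host.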

I'm running a Spark application locally with 4 nodes. When I run my application, it shows my driver at the address 10.0.2.15:

INFO Utils: Successfully started service 'SparkUI' on port 4040.
INFO SparkUI: Bound SparkUI to 0.0.0.0, and started at

At the end of the run it displays:

INFO SparkUI: Stopped Spark web UI at
INFO MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped!
INFO BlockManagerMaster: BlockManagerMaster stopped
INFO OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped!
